21 Resource Identity

Determining the identity of resources that belong in a domain, deciding which properties are important or relevant to the people or systems operating in that domain, and then specifying the principles by which those properties encapsulate or define the relationships among the resources are the essential tasks when building any organizing system. In organizing systems used by individuals or with small scope, the methods for doing these tasks are often ad hoc and unsystematic, and the organizing systems are therefore idiosyncratic and do not scale well. At the other extreme, organizing systems designed for institutional or industry-wide use, especially in information-intensive domains, require systematic design methods to determine which resources will have separate identities and how they are related to each other. These resources and their relationships are then described in conceptual models which guide the implementation of the systems that manage the resources and support interactions with them.[1]

Identity and Physical Resources

Our human visual and cognitive systems do a remarkable job at picking out objects from their backgrounds and distinguishing them from each other. In fact, we have little difficulty recognizing an object or a person even if we are seeing them from a novel distance and viewing angle or with different lighting, shading, and so on. When we watch a football game, we do not have any trouble perceiving the players moving around the field, and their contrasting uniform colors allow us to see that there are two different teams.

The perceptual mechanisms that make us see things as permanent objects with contrasting visible properties are just the prerequisite for the organizing tasks of identifying the specific object, determining the categories of objects to which it belongs, and deciding which of those categories is appropriate to emphasize. Most of the time we carry out these tasks in an automatic, unconscious way; at other times we make conscious decisions about them. For some purposes we consider a sports team as a single resource; for others we consider it as a collection of separate players, as offense and defense, as starters and reserves, and so on.[2]

Although we have many choices about how we can organize football players, all of them will include the concept of a single player as the smallest identifiable resource. We are never going to think of a football player as an intentional collection of separately identified leg, arm, head, and body resources because there are no other ways to “assemble” a human from body parts. Put more generally, there are some natural constraints on the organization of matter into parts or collections based on sizes, shapes, materials, and other properties that make us identify some things as indivisible resources in some domain.

Identity and Bibliographic Resources

Pondering the question of identity is something relatively recent in the world of librarians and catalogers. Libraries have been around for about 4000 years, but until the last few hundred years librarians created “bins” of headings and topics to organize resources without bothering to give each individual item a separate identifier or name. This meant searchers first had to make an educated guess as to which bin might house their desired information—“Histories”? “Medical and Chemical Philosophy”?—then scour everything in the category in a quest for their desired item. The choices were ad hoc and always local—that is, each cataloger decided the bins and groupings for each catalog.[3]

The first systematic approach to dealing with the concept of identity for bibliographic resources was developed by Antonio Panizzi at the British Museum in the mid-19th century. Panizzi wondered: How do we differentiate similar objects in a library catalog? His solution was a catalog organized by author name with an index of subjects, along with his newly concocted Rules for the Compilation of the Catalogue. This contained 91 rules about how to identify and arrange author names and titles and what to do with anonymous works. The rules were meant to codify how to differentiate and describe each singular resource in his library. Taken together, the rules serve to group all the different editions and versions of a work together under a single identity.[4]

The concept of identity for bibliographic resources was refined in the 1950s by Lubetzky, who enlarged the concept of the work to make it a more abstract idea of an author’s intellectual or artistic creation. According to Lubetzky’s principle, an audio book, a video recording of a play, and an electronic book should each be listed as distinct items, yet still linked to the original because of their overlapping intellectual origin.[5]

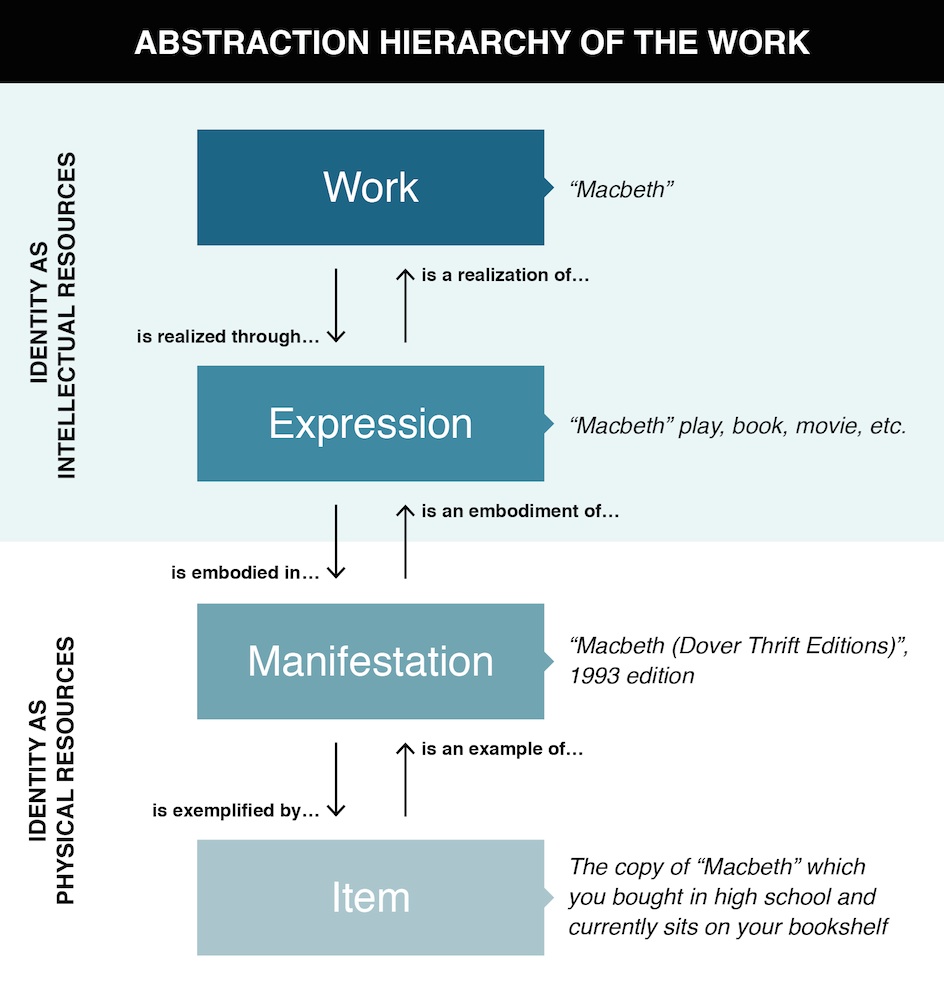

The distinctions put forth by Panizzi, Lubetzky, Svenonius and other library science theorists have evolved today into a four-level abstraction hierarchy (see Figure: The FRBR Abstraction Hierarchy) that runs from the abstract work, through an expression in multiple formats or genres and a particular manifestation in one of those formats or genres, to a specific physical item. The broad scope from the abstract work to the specific item is essential because organizing systems in libraries must organize tangible artifacts while expressing the conceptual structure of the domains of knowledge represented in their collections.

This hierarchy is defined in the Functional Requirements for Bibliographic Records (FRBR), published as a standard by the International Federation of Library Associations and Institutions (IFLA).[6]

If we revisit the question “What is this thing we call Macbeth?” we can see how different ways of answering fit into this abstraction hierarchy. The most specific answer is that “Macbeth” is a specific item, a very particular and individual resource, like that dog-eared paperback with yellow marked pages that you owned when you read Macbeth in high school. A more abstract answer is that Macbeth is an idealization called a work, a category that includes all the plays, movies, ballets, or other intellectual creations that share a recognizable amount of the plot and meaning from the original Shakespeare play.

The abstraction hierarchy for identifying resources yields four different answers about the identity of an information resource.
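
The four levels can be sketched as a small data structure. This is only an illustrative sketch, not part of the FRBR standard: the class names follow the four levels named above, while the field names (`form`, `edition`, `copy_note`) and the `identities` helper are hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Work:
    """The abstract intellectual or artistic creation."""
    title: str


@dataclass(frozen=True)
class Expression:
    """A realization of a work in some medium or notation."""
    work: Work
    form: str  # hypothetical field, e.g. "text" or "film"


@dataclass(frozen=True)
class Manifestation:
    """The set of physical artifacts that share one expression."""
    expression: Expression
    edition: str  # hypothetical field


@dataclass(frozen=True)
class Item:
    """One specific physical copy."""
    manifestation: Manifestation
    copy_note: str  # hypothetical field


def identities(item: Item) -> Dict[str, str]:
    """Return one answer to 'what is this thing?' per level of the hierarchy."""
    m = item.manifestation
    e = m.expression
    w = e.work
    return {
        "work": w.title,
        "expression": f"{w.title} ({e.form})",
        "manifestation": f"{w.title} ({e.form}), {m.edition}",
        "item": f"{w.title} ({e.form}), {m.edition}, {item.copy_note}",
    }


macbeth = Work("Macbeth")
text = Expression(macbeth, "text")
film = Expression(macbeth, "film")
folger = Manifestation(text, "Folger Library print edition")
my_copy = Item(folger, "dog-eared paperback")
other_copy = Item(folger, "library copy")

for level, answer in identities(my_copy).items():
    print(f"{level}: {answer}")
```

Two copies of the same edition are different items but share a manifestation, and the text and film are different expressions of the same work, which is the kind of distinction the hierarchy is meant to capture.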

Identity and Information Components

In information-intensive domains, documents, databases, software applications, or other explicit repositories or sources of information are ubiquitous and essential to the creation of value for the user, reader, consumer, or customer. Value is created through the comparison, compilation, coordination or transformation of information in some chain or choreography of processes operating on information flowing from one information source or process to another. These processes are employed in accounting, financial services, procurement, logistics, supply chain management, insurance underwriting and claims processing, legal and professional services, customer support, computer programming, and energy management.

The processes that create value in information-intensive domains are “glued together” by shared information components that are exchanged in documents, records, messages, or resource descriptions of some kind. Information components are the primitive and abstract resources in information-intensive domains. They are the units of meaning that serve as building blocks of composite descriptions and other information artifacts.

The value creation processes in information-intensive domains work best when their component parts come from a common controlled vocabulary for components, or when each uses a vocabulary with a granularity and semantic precision compatible with the others. For example, the value created by a personal health record emerges when information from doctors, clinics, hospitals, and insurance companies can be combined because they all share the same “patient” component as a logical piece of information.

This abstract definition of information components does not help identify them, so we will introduce some heuristic criteria: An information component can be: (1) Any piece of information that has a unique label or identifier or (2) Any piece of information that is self-contained and comprehensible on its own.[7]

These two criteria for determining the identity of information components are often easy to satisfy through observations, interviews, and task analysis because people naturally use many different types of information and talk easily about specific components and the documents that contain them. Some common components (e.g., person, location, date, item) and familiar document types (e.g., report, catalog, calendar, receipt) can be identified in almost any domain. Other components need to be more precisely defined to meet the more specific semantic requirements of narrower domains. These smaller or more fine-grained components might be viewed as refined or qualified versions of the generic components and document types, like course grade and semester components in academic transcripts, airport codes and flight numbers in travel itineraries and tickets, and drug names and dosages in prescriptions.

Decades of practical and theoretical effort in conceptual modeling, relational theory, and database design have resulted in rigorous methods for identifying information components when requirements and business rules for information can be precisely specified. For example, in the domain of business transactions, required information like item numbers, quantities, prices, and payment information must be encoded as a particular type of data (integer, decimal, Unicode string, etc.) that has clearly defined possible values and follows clear occurrence rules.[8]
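
As a rough illustration of what "a particular type of data" with defined possible values and occurrence rules might look like, consider the following sketch. The `OrderLine` and `Order` classes and their specific rules (a quantity of at least 1, a non-negative price, at least one line per order) are assumptions made up for this example rather than rules taken from any real transaction standard.

```python
from dataclasses import dataclass
from decimal import Decimal
from typing import Tuple


@dataclass(frozen=True)
class OrderLine:
    item_number: str     # Unicode string, must not be empty
    quantity: int        # integer, assumed to be at least 1
    unit_price: Decimal  # decimal, assumed to be non-negative

    def __post_init__(self) -> None:
        # Check both the data type and the permitted values of each component.
        if not isinstance(self.item_number, str) or not self.item_number:
            raise ValueError("item_number must be a non-empty string")
        if not isinstance(self.quantity, int) or self.quantity < 1:
            raise ValueError("quantity must be an integer of at least 1")
        if not isinstance(self.unit_price, Decimal) or self.unit_price < 0:
            raise ValueError("unit_price must be a non-negative Decimal")


@dataclass(frozen=True)
class Order:
    lines: Tuple[OrderLine, ...]

    def __post_init__(self) -> None:
        # Occurrence rule (assumed): an order contains one or more lines.
        if len(self.lines) < 1:
            raise ValueError("an order needs at least one line")

    def total(self) -> Decimal:
        return sum((l.quantity * l.unit_price for l in self.lines), Decimal("0"))


order = Order(lines=(OrderLine("A-19", 2, Decimal("9.99")),))
print(order.total())
```

Constructing an `OrderLine` with a quantity of 0, or an `Order` with no lines, raises a `ValueError`, so a component that violates its rules never enters the system.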

Identifying components can seem superficially easy at the transactional end of the Document Type Spectrum (see the sidebar in “Resource Domain”), with orders or invoices, forms requiring data entry, or other highly structured document types like product catalogs, where pieces of information are typically labeled and delimited by boxes, lines, white space or other presentation features that encode the distinctions between types of content. For example, the presence of ITEM, CUSTOMER NAME, ADDRESS, and PAYMENT INFORMATION labels on the fields of an online order form suggests these pieces of information are semantically distinct components in a retail application. In addition, these labels might have analogues in variable names in the source code that implements the order form, or as tags in an XML document created by the ordering application; <CustName>John Smith</CustName> and <Item>A-19</Item> in the order document can be easily identified when it is sent to the other services by the order management application.
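
To make this concrete, here is a minimal sketch of how a receiving service might pick the labeled components out of such an order document, using Python's standard `xml.etree.ElementTree` module. The `<CustName>` and `<Item>` elements come from the example above; the enclosing `<Order>` element and the `extract_components` function are assumptions added so that the fragment forms a complete document.

```python
import xml.etree.ElementTree as ET

# The <Order> wrapper is an assumption; only CustName and Item appear in the text.
ORDER_XML = """
<Order>
  <CustName>John Smith</CustName>
  <Item>A-19</Item>
</Order>
"""


def extract_components(xml_text: str) -> dict:
    """Map each child element's tag (the component label) to its text content."""
    root = ET.fromstring(xml_text.strip())
    return {child.tag: (child.text or "").strip() for child in root}


components = extract_components(ORDER_XML)
print(components)
```

Because every component carries its own tag, the receiver needs no knowledge of layout or position to tell the customer name from the item number.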

But the theoretically grounded methods for identifying components like those of relational theory and normalization that work for structured data do not strictly apply when information requirements are more qualitative and less precise at the narrative end of the Document Type Spectrum. These information requirements are typical of narrative, unstructured and semi-structured types of documents, and information sources like those often found in law, education, and professional services. Narrative documents include technical publications, reports, policies, procedures and other less structured information, where semantic components are rarely labeled explicitly and are often surrounded by text that is more generic. Unlike transactional documents that depend on precise semantics because they are used by computers, narrative documents are used by people, who can ask if they are not sure what something means, so there is less need to explicitly define the meaning of the information components. Occasional exceptions, such as where components in narrative documents are identified with explicit labels like NOTE and WARNING, only prove the rule.

Identity and Active Resources

Active resources (“Use Controlled Vocabularies”) initiate effects or create value on their own. In many cases an inherently passive physical resource like a product package or shipping pallet is transformed into an active one when associated with an RFID tag or bar code. Mobile phones contain device or subscriber IDs so that any information they communicate can be associated both with the phone and often, through indirect reference, with a particular person. If the resource has an IP address, it is said to be part of the “Internet of Things.”[9]

Organizing systems that create value from active resources often co-exist with or complement organizing systems that treat their resources as passive. In a traditional library, books sat passively on shelves and required users to read their spines to identify them. Today, some library books contain active RFID tags that make them dynamic information sources that self-identify by publishing their own locations. Similarly, a supermarket or department store might organize its goods as physical resources on shelves, treating them as passive resources; superimposed on that traditional organizing system is one that uses point-of-sale transaction information created when items are scanned at checkout counters to automatically re-order goods and replenish the inventory at the store where they were sold. In some stores the shelves contain sensors that continually “talk to the goods” and the information they gather can maintain inventory levels and even help prevent theft of valuable merchandise by tracking goods through a store or warehouse. The inventory becomes a collection of active resources, each item eager to announce its own location and ready to conduct its own sale. Another category of inanimate objects that are active resources consists of those that use Twitter to communicate their status or sensor measurements. These include bridges, rivers, and the Curiosity Rover on Mars.

The extent to which an active resource is “smart” depends on how much computing capability it has available to refine the data it collects and communicates. A large collection of sensors can transmit a torrent of captured data that requires substantial processing to distinguish significant events from those that reflect normal operation, and also from those that are statistical outliers with strange values caused by random noise. This challenge gets qualitatively more difficult as the amount of data grows to big data size, because a one-in-a-million event might be a statistical outlier that can be ignored, but if there are a thousand similar outliers in a billion sensor readings, this cluster of data probably reveals something important. On the other hand, giving every sensor the computing capability to refine its data so that it only communicates significant information might make the sensors too expensive to deploy.[10]
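
The difference between an isolated outlier and a cluster of similar outliers can be sketched in a few lines. This is a deliberately simple illustration, not a method described in this chapter: the function name, the normal range, the bucket width, and the minimum count are all hypothetical parameters, and real sensor pipelines would use more careful statistics.

```python
from collections import Counter


def recurring_outliers(readings, low, high, bucket_width, min_count):
    """Group out-of-range readings into buckets and keep buckets that recur.

    A single strange value is treated as noise; several strange values that
    fall into the same bucket are reported as a cluster worth examining.
    """
    buckets = Counter()
    for value in readings:
        if value < low or value > high:
            bucket_start = int(value // bucket_width) * bucket_width
            buckets[bucket_start] += 1
    return {start: n for start, n in buckets.items() if n >= min_count}


# Hypothetical temperature readings: mostly normal, one isolated glitch at -40,
# and three similar high values that form a cluster.
readings = [20.0] * 1000 + [95.3, 95.7, 96.1] + [-40.0]
clusters = recurring_outliers(readings, low=0, high=50, bucket_width=5, min_count=3)
print(clusters)
```

Here the isolated reading of -40 is dropped as noise, while the three readings near 95 survive as a cluster, mirroring on a small scale the reasoning about a thousand similar outliers in a billion readings.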

-

These methods go by different names in different disciplines, including “data modeling,” “systems analysis,” and “document engineering” (e.g., (Kent 2012), (Silverston 2000), (Glushko and McGrath 2005)). What they have in common is that they produce conceptual models of a domain that specify their components or parts and the relationships among these components or parts. These conceptual models are called “schemas” or “domain ontologies” in some modeling approaches, and are typically implemented in models that are optimized for particular technologies or applications.

-

Specifically, an NFL football team needs to be considered a single resource for games through the season and in playoffs, and 53 individual players for other situations, like the NFL draft or play-calling. The team and the team’s roster can be thought of as resources, and the team’s individual players are also resources that make up the whole team.

-

(Denton 2007) is a highly readable retelling of the history of cataloging that follows four themes (the use of axioms, user requirements, the work, and standardization and internationalization), culminating with their synthesis in the Functional Requirements for Bibliographic Records (FRBR).

-

This was a surprisingly controversial activity. Many opposed Panizzi’s efforts as a waste of time and effort because they assumed that “building a catalog was a simple matter of writing down a list of titles” (Denton 2007, p. 38).

-

Seymour Lubetzky worked for the US Library of Congress (LOC) from 1943 to 1960, where he tirelessly sought to simplify the proliferating mass of special-case cataloging rules proposed by the American Library Association, because at the time the LOC had the task of applying those rules and making the catalog cards other libraries used. Lubetzky’s book on Cataloguing Rules and Principles (Lubetzky 1953) bluntly asks “Is this rule necessary?” and was a turning point in cataloging.

-

In between the abstraction of the work and the specific single item are two additional levels in the FRBR abstraction hierarchy. An expression denotes the multiple realizations of a work in some particular medium or notation, where it can actually be perceived. There are many editions and translations of Macbeth, but they are all the same expression, and they are a different expression from all of the film adaptations of Macbeth. A manifestation is the set of physical artifacts with the same expression. All of the copies of the Folger Library print edition of Macbeth are the same manifestation.

-

This kind of advice can be found in many data or conceptual modeling texts, but this particular statement comes from (Glushko, Weaver, Coonan, and Lincoln 1988). Similar advice can also be found in the information science literature: “A unit of information...would have to be...correctly interpretable outside any context” (Wilson 1968, p. 18).

-

A group of techniques collectively called “normalization” produces a set of tightly defined information components that have minimal redundancy and ambiguity. Imagine that a business keeps information about customer orders using a “spreadsheet” style of organization in which a row contains cells that record the date, order number, customer name, customer address, item ID, item description, quantity, unit price, and total price. If an order contains multiple products, these would be recorded on additional rows, as would subsequent orders from the same customer. All of this information is important to the business, but this way of organizing it has a great deal of redundancy and inefficiency. For example, the customer address recurs in every order, and the customer address field merges street, city, state and zip code into a large unstructured field rather than separating them as atomic components of different types of information with potentially varying uses. Similar redundancy exists for the products and prices. Canceling an order might result in the business deleting all the information it has about a particular customer or product.

Normalization divides this large body of information into four separate tables, one for customers, one for customer orders, one for the items contained in each order, and one for item information. This normalized information model encodes all of the information in the “spreadsheet style” model, but eliminates the redundancy and avoids the data integrity problems that are inherent in it.

Normalization is taught in every database design course. The concept and methods were proposed by (Codd 1970), who invented the relational data model, and have been taught to students in numerous database design textbooks like (Date 2003).
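
The four-table decomposition described above can be sketched with plain Python dictionaries standing in for database tables. The sample rows, the surrogate customer ID, and the assumed "street, city, state zip" address format are all hypothetical, and a real design would follow the formal normalization steps rather than this hand-written split.

```python
from decimal import Decimal

# Hypothetical "spreadsheet style" rows: date, order number, customer name,
# customer address, item ID, item description, quantity, unit price.
ROWS = [
    ("2024-01-05", 1001, "John Smith", "1 Main St, Springfield, IL 62701", "A-19", "Widget", 2, "9.99"),
    ("2024-01-05", 1001, "John Smith", "1 Main St, Springfield, IL 62701", "B-07", "Gasket", 1, "4.50"),
    ("2024-02-11", 1002, "John Smith", "1 Main St, Springfield, IL 62701", "A-19", "Widget", 1, "9.99"),
]


def normalize(rows):
    """Split flat rows into customers, orders, order items, and items."""
    customers, orders, items, order_items = {}, {}, {}, []
    customer_ids = {}  # (name, address) -> surrogate ID (an assumption)
    for date, order_no, name, address, item_id, description, qty, price in rows:
        key = (name, address)
        if key not in customer_ids:
            customer_ids[key] = len(customer_ids) + 1
            # Separate the merged address field into atomic components,
            # assuming the format "street, city, state zip".
            street, city, state_zip = address.split(", ")
            state, zip_code = state_zip.split(" ")
            customers[customer_ids[key]] = {
                "name": name, "street": street, "city": city,
                "state": state, "zip": zip_code,
            }
        orders[order_no] = {"date": date, "customer_id": customer_ids[key]}
        items[item_id] = {"description": description, "unit_price": Decimal(price)}
        order_items.append({"order_no": order_no, "item_id": item_id, "quantity": qty})
    return customers, orders, order_items, items


def order_total(order_no, order_items, items):
    """Total price is derived on demand instead of being stored redundantly."""
    return sum(
        (line["quantity"] * items[line["item_id"]]["unit_price"]
         for line in order_items if line["order_no"] == order_no),
        Decimal("0"),
    )


customers, orders, order_items, items = normalize(ROWS)
print(len(customers), len(orders), len(order_items), len(items))
print(order_total(1001, order_items, items))
```

The customer's address is now stored once rather than in every row, and deleting an order's rows from `orders` and `order_items` leaves the customer and item records intact.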

-

The “Internet of Things” concept spread very quickly after it was proposed in 1999 by Kevin Ashton, who co-founded the Auto-ID Center at MIT that year to standardize RFID and sensor information. For a popular introduction, see (Gershenfeld, Krikorian, and Cohen 2004). For a recent technical survey and a taxonomy of application domains and scenarios, see (Atzori, Iera, and Morabito 2010).

-

Pattern analysis can help escape this dilemma by enabling predictive modeling to make optimal use of the data. In designing smart things and devices for people, it is helpful to create a smart model in order to predict the kinds of patterns and locations relevant to the data collected or monitored. These allow designers to develop a set of dimensions and principles that will act as smart guides for the development of smart things. Modeling helps to enable automation, security, or energy efficiency, and baseline models can be used to detect anomalies. As for location, exact locations are unnecessary; use of a “symbolic space” to represent each “sensing zone”—e.g., rooms in a house—and an individual’s movement history as a string of symbols—e.g., abcdegia—works sufficiently as a model of prediction. See (Das et al. 2002).
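
To show how a movement history written as a string of zone symbols could support prediction, here is a minimal sketch that counts which zone has most often followed the current one. It is a simple first-order frequency model chosen for illustration and is not necessarily the algorithm used in (Das et al. 2002); the function names are hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Optional


def build_transitions(history: str) -> Dict[str, Counter]:
    """Count how often each zone symbol is followed by each other symbol."""
    transitions: Dict[str, Counter] = defaultdict(Counter)
    for current, following in zip(history, history[1:]):
        transitions[current][following] += 1
    return transitions


def predict_next(history: str) -> Optional[str]:
    """Predict the next zone as the most frequent successor of the last zone.

    Returns None when the history is empty or the last zone has never been
    followed by anything in the recorded history.
    """
    if not history:
        return None
    candidates = build_transitions(history).get(history[-1])
    if not candidates:
        return None
    return candidates.most_common(1)[0][0]


# Each letter stands for one "sensing zone", as in the example string above.
print(predict_next("abcdegia"))
```

In the history `abcdegia` the person is currently in zone `a`, and the only recorded move out of `a` was to `b`, so the sketch predicts `b`. A longer history would let the counts separate habitual routes from occasional ones.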